- Home

- Assessments

- Bioregional Assessment Program

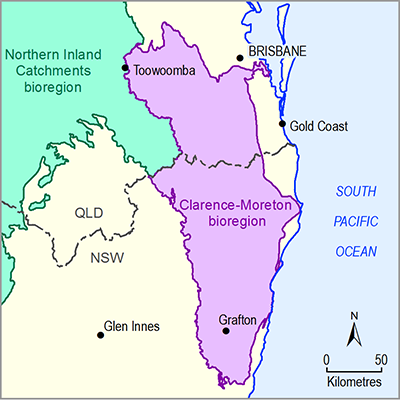

- Clarence-Moreton bioregion

- 2.6.1 Surface water numerical modelling for the Clarence-Moreton bioregion

- 2.6.1.5 Uncertainty

2.6.1.5.2 Qualitative uncertainty analysis

The major assumptions and model choices underpinning the Richmond river basin surface water model are listed in Table 6. The goal of the table is to provide a non-technical audience with a systematic overview of the model assumptions, their justification and effect on predictions, as judged by the modelling team. This table will also assist in an open and transparent review of the modelling.

Each assumption is scored on four attributes as ‘low’, ‘medium’ or ‘high’. The data column captures the degree to which the assumption or model choice is driven by data availability, as judged by the question ‘If more or different data were available, would this assumption/choice still have been made?’. A ‘low’ score means that the assumption is not influenced by data availability, while a ‘high’ score indicates that the choice would be revisited if more data were available. Closely related is the resources attribute. This column captures the extent to which the resources available for the modelling, such as computing resources, personnel and time, influenced the assumption or model choice. A ‘low’ score indicates the same assumption would have been made with unlimited resources, while a ‘high’ score indicates the assumption is driven by resource constraints. The third attribute deals with technical and computational issues. ‘High’ is assigned to assumptions and model choices that are dominantly driven by computational or technical limitations of the model code. These include issues related to the spatial and temporal resolution of the models. The final, and most important, column is the effect of the assumption or model choice on the predictions. This is a qualitative assessment by the modelling team of the extent to which a model choice will affect the model predictions, with ‘low’ indicating a minimal effect and ‘high’ a large effect.

Assumptions are discussed in detail in the sections below Table 6, including the rationale for the scoring.

Table 6 Qualitative uncertainty analysis as used for the Richmond river basin surface water model

2.6.1.5.2.1 Selection of calibration catchments

The parameters that control the transformation of rainfall into streamflow are adjusted based on a comparison of observed and simulated historical streamflow. To calibrate the surface water model, a number of catchments are selected outside the Richmond river basin (e.g. Clarence river basin and Tweed river basin). The parameter combinations that result in objective function values above the predefined threshold are deemed suitable for all catchments in the bioregion.

The selection of calibration catchments is therefore almost solely based on data availability, which results in a ‘medium’ score for this criterion. As it is technically trivial to include more calibration catchments in the calibration procedure and as it would not appreciably change the computing time required, both the resources and technical columns have a ‘low’ score.

This regionalisation of parameters is a widely established technique internationally to predict flows at ungauged catchments (Bourgin et al., 2015). The regionalisation methodology is valid as long as the selected catchments for calibration are comparable in size, climate, land use, topography, geology and geomorphology. The majority of these assumptions can be considered valid (see Section 2.6.1.6) and the effect on predictions is therefore deemed small.

While the regionalisation assumption is valid, the availability of additional calibration catchments may further constrain the predictions. The overall effect of the choice of calibration catchments is therefore considered moderate, which is reflected in the ‘medium’ scoring.

2.6.1.5.2.2 High-flow and low-flow objective function

The AWRA-L model simulates daily streamflow. High-streamflow and low-streamflow conditions are governed by different aspects of the hydrological system. It has proven to be very difficult for any rainfall-runoff code to find parameter sets that are able to adequately simulate both extremes of the hydrograph. In recognition of this issue, two objective functions are chosen, one tailored to medium and high flows and another tailored to low flows.

Even with more calibration catchments and more time available for calibration, separate high-flow and low-flow objective functions would still be necessary to find parameter sets suited to simulating the different aspects of the hydrograph. Data and resources are therefore scored ‘low’, while the technical criterion is scored ‘high’.

The high-streamflow objective function is a weighted sum of the Nash–Sutcliffe efficiency (E) and the bias. The former is most sensitive to differences in simulated and observed daily streamflow, while the latter is most affected by the discrepancy between long-term observed and simulated streamflow (Pushpalatha et al., 2012). The weighting of both components represents the trade-off between simulating daily and mean annual streamflow behaviour.
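As an illustration only, the trade-off between daily fit and long-term volume can be sketched as follows. The exact functional form and weights used in the assessment are given in Viney et al. (2009) and are not reproduced here; the combination below, the function names and the `bias_weight` parameter are assumptions made for this sketch.

```python
def nash_sutcliffe(observed, simulated):
    """Nash-Sutcliffe efficiency E: 1 is a perfect fit, 0 is no better than the observed mean."""
    mean_obs = sum(observed) / len(observed)
    residual = sum((o - s) ** 2 for o, s in zip(observed, simulated))
    variance = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - residual / variance  # undefined if the observed series is constant


def relative_bias(observed, simulated):
    """Relative error in total (long-term) streamflow volume."""
    return (sum(simulated) - sum(observed)) / sum(observed)


def high_flow_objective(observed, simulated, bias_weight=1.0):
    """Hypothetical weighted combination: reward daily fit (E), penalise volume bias."""
    e = nash_sutcliffe(observed, simulated)
    b = relative_bias(observed, simulated)
    return e - bias_weight * abs(b)


observed = [1.0, 2.0, 3.0, 4.0]
simulated = [2.0, 3.0, 4.0, 5.0]  # right shape, but too much water overall
print(high_flow_objective(observed, simulated))
```

A larger `bias_weight` favours parameter sets that reproduce mean annual streamflow; a smaller one favours day-to-day agreement.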

The low-streamflow objective is achieved by transforming the observed and simulated streamflow through a Box-Cox transformation (see Section 2.6.1.4). With this transformation, a small number of large discrepancies in high streamflow have less prominence in the objective function than a large number of small discrepancies in low streamflow. Like the high-streamflow objective function, the low-streamflow objective function consists of two components: E computed on streamflow transformed with a Box-Cox power of 0.1, and bias. These again represent the trade-off between daily and mean annual accuracy.
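The effect of the transformation can be shown with a small sketch. It uses the standard Box-Cox form (q^λ − 1)/λ with λ = 0.1; the function names and the example flows are hypothetical, and the bias component of the objective is omitted for brevity.

```python
def box_cox(flow, power=0.1):
    """Standard Box-Cox transform; compresses high flows, spreads out low flows."""
    return (flow ** power - 1.0) / power


def nash_sutcliffe(observed, simulated):
    """Nash-Sutcliffe efficiency E."""
    mean_obs = sum(observed) / len(observed)
    residual = sum((o - s) ** 2 for o, s in zip(observed, simulated))
    variance = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - residual / variance


def low_flow_efficiency(observed, simulated, power=0.1):
    """E computed on Box-Cox transformed flows."""
    obs_t = [box_cox(q, power) for q in observed]
    sim_t = [box_cox(q, power) for q in simulated]
    return nash_sutcliffe(obs_t, sim_t)


observed = [0.1, 0.2, 0.1, 100.0]
peak_error = [0.1, 0.2, 0.1, 90.0]      # one large error at the flood peak
low_flow_error = [0.2, 0.4, 0.2, 100.0]  # several small errors at low flow

# Untransformed E is dominated by the peak error ...
print(nash_sutcliffe(observed, peak_error), nash_sutcliffe(observed, low_flow_error))
# ... while the transformed E penalises the low-flow errors more heavily.
print(low_flow_efficiency(observed, peak_error), low_flow_efficiency(observed, low_flow_error))
```

In this example the ranking of the two simulations reverses once the flows are transformed, which is the behaviour the low-streamflow objective relies on.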

The choice of the weights of the two terms in both objective functions is based on the experience of the modelling team (Viney et al., 2009). The choice is not constrained by data, technical issues or available resources. While different choices of the weights will result in a different set of optimised parameter values, experience in the Water Information Research and Development Alliance (WIRADA) project, in which AWRA-L is calibrated on a continental scale, has shown the calibration to be fairly robust against the weights in the objective function (Vaze et al., 2013).

Within the bioregional assessment, the effect of this choice is further mitigated through the Approximate Bayesian Computation process, in which summary statistics specific to each hydrological response variable are used to select behavioural posterior parameter distributions.

While the selection of objective functions and their weights is a crucial step in the surface water modelling process, the uncertainty analysis reduces its overall effect on the predictions to a marginal one, hence the ‘low’ scoring.

2.6.1.5.2.3 Selection of summary statistics for uncertainty analysis

The summary statistic in the Approximate Bayesian Computation process has a very similar role to the objective function in calibration. Where the calibration focuses on identifying a single parameter set that provides an overall good fit between observed and simulated values, the uncertainty analysis aims to select an ensemble of parameter combinations that is best suited to make the chosen prediction.

Within the context of the bioregional assessment, the calibration aims at providing a parameter set that performs well at a daily resolution, while the uncertainty analysis focuses on hydrological response variables that summarise specific aspects of the yearly hydrograph.

The summary statistic is the mean of the E of the observed versus simulated hydrological response variable values at the calibration catchments that contribute to flow in the Richmond river basin. This ensures parameter combinations are chosen that are able to simulate the specific part of the hydrograph relevant to the hydrological response variable, at a local scale. There are other ways to summarise the difference between observed and simulated values. The current summary statistic, based on the mean of Nash–Sutcliffe efficiencies across catchments, is chosen because it provides a fair, unbiased estimate of the model mismatch and is fairly robust against extreme outliers.
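A minimal sketch of such a summary statistic, for one parameter combination and one hydrological response variable, is given below. The data layout (a list of observed and simulated yearly values per catchment) and the function names are assumptions made for illustration.

```python
def nash_sutcliffe(observed, simulated):
    """Nash-Sutcliffe efficiency E."""
    mean_obs = sum(observed) / len(observed)
    residual = sum((o - s) ** 2 for o, s in zip(observed, simulated))
    variance = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - residual / variance


def summary_statistic(catchments):
    """Mean E over catchments.

    catchments: list of (observed_values, simulated_values) pairs, one pair per
    calibration catchment, each holding yearly values of a single hydrological
    response variable (hypothetical layout).
    """
    scores = [nash_sutcliffe(obs, sim) for obs, sim in catchments]
    return sum(scores) / len(scores)


catchments = [
    ([1.0, 2.0, 3.0, 4.0], [1.0, 2.0, 3.0, 4.0]),  # perfect match
    ([1.0, 2.0, 3.0, 4.0], [2.0, 3.0, 4.0, 5.0]),  # consistent overestimate
]
print(summary_statistic(catchments))
```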

Like the objective function selection, the choice of summary statistic is primarily guided by the predictions and to a much lesser extent by the available data, technical issues or resources. This is the reason for the ‘low’ score.

2.6.1.5.2.4 Selection of acceptance threshold for uncertainty analysis

Ideally, the acceptance threshold is defined independently, based on an analysis of the system (see companion submethodology M09 (as listed in Table 1) for propagating uncertainty through models (Peeters et al., 2016)). For the surface water hydrological response variables, such an independent threshold definition can be based on the observation uncertainty, which depends on an analysis of the rating curves for each observation gauging station as well as at the model nodes. Limited rating curve data are available, hence the ‘medium’ score. Even if this information were available, the operational constraints within the bioregional assessment prevent such a detailed analysis, although it is technically feasible. The resources column therefore receives a ‘high’ score while the technical column receives a ‘low’ score.

The choice of setting the acceptance threshold equal to the 75th percentile of the summary statistic for a particular hydrological response variable is a subjective decision made by the Assessment team. It does ensure, however, that the parameter combinations accepted in the posterior parameter distribution match the observed hydrological response variables at least as well as the top 25% of the design of experiment runs. By varying this threshold through a trial-and-error procedure in the testing phase of the uncertainty analysis methodology, the modelling team learned that this threshold is an acceptable trade-off between overfitting the observations and constraining the priors. While relaxing the threshold will lead to larger uncertainty intervals for the predictions, the median predicted values are considered robust to this change. A formal test of this hypothesis has not yet been carried out. The effect on predictions is therefore scored ‘medium’.
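The acceptance step can be sketched as follows, assuming one summary statistic value per design of experiment run and a linearly interpolated percentile. The function names and example values are hypothetical; the percentile definition used in the assessment may differ.

```python
def percentile(values, fraction):
    """Linearly interpolated percentile of a list (fraction between 0 and 1)."""
    ordered = sorted(values)
    position = fraction * (len(ordered) - 1)
    lower = int(position)
    upper = min(lower + 1, len(ordered) - 1)
    weight = position - lower
    return ordered[lower] * (1.0 - weight) + ordered[upper] * weight


def accept_runs(summary_stats, fraction=0.75):
    """Return the threshold and the indices of runs at or above it."""
    threshold = percentile(summary_stats, fraction)
    accepted = [i for i, s in enumerate(summary_stats) if s >= threshold]
    return threshold, accepted


# One summary statistic (mean E) per design of experiment run.
summary_stats = [0.1, 0.5, 0.9, 0.3, 0.7, 0.2, 0.8, 0.6]
threshold, accepted = accept_runs(summary_stats)
print(threshold, accepted)
```

Lowering `fraction` relaxes the threshold and accepts more runs, which is the change described above as widening the uncertainty intervals of the predictions.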

2.6.1.5.2.5 Interaction with the groundwater model

The coupling between the results of the groundwater models and the surface water model, described in the model sequence section (Section 2.6.1.1), represents a pragmatic solution to account for surface water – groundwater interactions at a regional scale. Like the majority of rainfall-runoff models, the current version of AWRA-L does not allow an integrated exchange of groundwater-related fluxes during runtime. Even if this capability were available, the differences in spatial and temporal resolution would require non-trivial upscaling and downscaling of the spatio-temporal distributions of fluxes. The choice of the coupling methodology is therefore mostly a technical choice, hence the ‘high’ score for this attribute. The data and resources columns are scored ‘medium’ because, even if it were technically possible to couple both models in an integrated fashion, the implementation would be constrained by the available data and the operational constraints.

The integration of a change in baseflow from the groundwater model into AWRA-L does mean that the overall water balance is no longer closed in AWRA-L. This method of coupling both models is therefore only valid if the exchange flux is small compared to the other components of the water balance. The exchange flux (see companion product 2.5 for the Clarence-Moreton bioregion (Cui et al., 2016)) shows that for the Richmond river basin the change in baseflow under baseline and under the CRDP is much smaller than the other components of the surface water balance, and hence the overall effect on the predictions is assumed to be small. The effect on predictions is scored ‘medium’ as a change in hydrology is only possible via a change in the surface water – groundwater interaction.

2.6.1.5.2.6 No streamflow routing

Streamflow routing is not taken into account in the Richmond river basin as the surface water storages are relatively small (see companion product 1.5 for the Clarence-Moreton bioregion (Rassam et al., 2014)). Thus, the effects of regulation on the system are sufficiently small that lags in streamflow due to routing do not need to be taken into account. The effect of not incorporating routing on the predictions is expected to be minimal. Given the small potential for impact, resourcing the development of a river routing model for this region was not warranted.

Only the data column is scored ‘medium’ as there is limited information on dams in the bioregion. All other attributes are scored ‘low’ as it is technically feasible and within the operational constraints of the bioregional assessments to carry out streamflow routing. Doing so would only minimally affect the predictions, hence the ‘low’ score.
